By Cameron Baillie

Upon completing my bachelor’s degree in 2022, I had heard little talk of artificial intelligence (AI) outside my philosophy classrooms. Yet, when I began my master’s programme at LSE last September, AI was being discussed wherever I turned. Clearly, something huge had happened in my year out of academia, about which I knew very little. It seemed that I ought to know more, and sharpish.

OpenAI’s ChatGPT celebrated its first birthday in November 2023, having generated innumerable headlines, concerns, and grandiose speculations worldwide. In the same month, the UK hosted its first global AI safety summit. South Park’s episode Deep Learning, released earlier that year, featured and was co-written by AI: Stan’s girlfriend is arrested at school and is “going to jail for cheating”. Her crime? Using ChatGPT in an essay.

(Spoiler!) Stan saves her, declaring, “We can’t blame people who are using ChatGPT. It’s not their fault!”, instead condemning the companies who’ve monetised AI and rapidly made it widely accessible. He then promotes the need for equal access to, control of, and contributing power in generative AI. Sounds ideal – but what’s the reality? More importantly, will any conniving LSE students be “going to jail for cheating” anytime soon?

To better understand the nature of AI, I spoke to LSE Language Coordinator and linguistics lecturer Peter Skrandies. What generative AI produces is essentially “human-like text that is coherent”, he began. Tools such as ChatGPT are built on ‘Large Language Models’ (LLMs), which draw their data, in the form of human language, from trillions of instances online. They “create resemblances of human texts, but have no understanding of language”, Skrandies explained, and so only “become meaningful when we read them”. He highlighted AI’s potential for students, “redefining how learning looks” for language-learners especially, but there remain distinct “concerns around integrity of assessment”.

In assessment, “reliable detection is the problem and we don’t have answers yet”, Skrandies admitted. He likened the current situation to an ‘arms race’, predicting a scenario where detection software competes with rapid AI developments, which will in turn outsmart detectors. Universities’ current Turnitin software is not capable of monitoring for AI use, since “beyond 7-to-9 words, any human sentence is likely unique [and] this is how plagiarism is detected”, Skrandies warned. Consequently, “all universities are scrambling to form policies” around AI, and LSE’s policy has to “evolve and become more sophisticated”.

LSE’s ‘School statement on Artificial Intelligence, Assessment, and Academic Integrity’ was updated less than two months after its release in September 2023, suggesting such a ‘scramble’. It acknowledges the “potential threat generative AI may pose to academic integrity”, and affirms LSE’s commitment to academic standards and valuable awards, as well as its “robust approach to academic misconduct”. But it also recognises “opportunities for both staff and students to explore…to enhance teaching, learning, and assessment”. LSE is keeping its communications in step with technological developments, and has established a working group to include student voices.

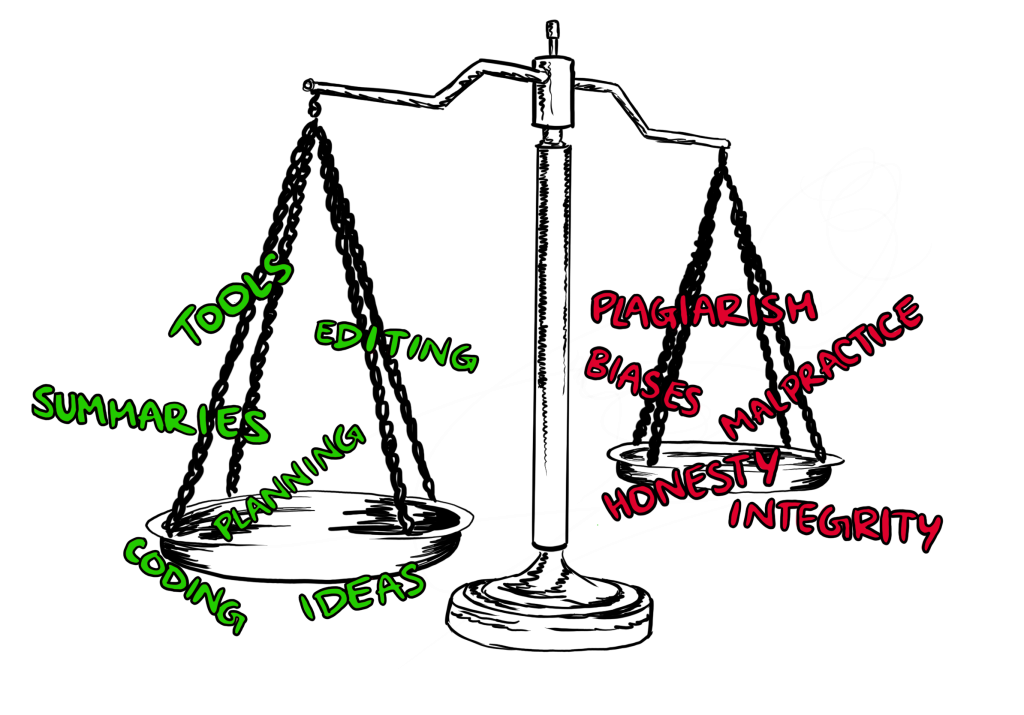

School guidance itself is essentially prohibitive, “unless otherwise specified by individual departments”. It is also effectively federalised, devolving guidance even to individual course convenors. Many departments proactively release specific guidance on AI usage: the Media and Communications department, for example, articulated various legitimate uses for AI, provided any use be stated explicitly. Development students in the Foundational Econometrics course (DV494) are likewise encouraged to use AI for help with coding. Management students are being offered the ‘sector-leading’ Managing Artificial Intelligence (MG4J8) course, which critically assesses big data and AI from “an integrated social-economic-political-technical perspective”. Other departments defer to School policy.

LSE100, the introductory multi-disciplinary first-year course, offers students guidance on AI. As a matter of originality, it prohibits copying “any text produced by a generative AI tool into a summative assessment”, as well as any copyright breaches. However, it permits AI use in “literature searching, idea generation and providing proofreading or editing suggestions”, and for coding tools or generation of non-text elements, provided all such use is declared explicitly.

For students keen to learn more, the online audit course ‘AI for social sciences’ offers introductions to “AI in real-world settings”, its utilisation in “unstructured data such as text” for qualitative researchers, and “social dimensions such as bias”. Another course, ‘Generative AI: Developing your AI Literacy’, is also available, hopefully aiding students in the rapidly-evolving AI-era academy.

To gain a first-year perspective, I spoke to Geography student Irini: she was undertaking A Levels when ChatGPT launched, and claims that school pupils adopted AI quickly, while policies lagged. Now at university, Irini is a student ambassador and sits on LSE’s Student Education Panel. She finds AI useful for tasks like planning, applications, summarising readings, and deconstructing questions, but believes it can “limit one’s parameters”. She worries that it may leave society with “limited critical-thinking and imagination”.

Irini queried the notion that “AI writes essays for you”, and misconceptions that students have malicious intent: rather, they “just use it to make their lives easier”. Most of her first-year friends “use it every day, for everything” – AI is now “indispensable” and “fully integrated into daily life”. Irini remains optimistic about AI’s potential use in learning, but predicts more in-person exams and assessment, which peers generally don’t want. She is less optimistic about career prospects, however, concerned that ‘high-’ and ‘low-skilled jobs’ alike may be replaced.

Formerly hesitant MSc Political Sociology student Jack first “approached ChatGPT from a Luddite perspective”, with similar trepidation around how AI “may threaten jobs and exacerbate existing inequalities”. Other ‘hippie’ concerns included surveillance expansion, “the race to market AI”, and the half-jokingly alluded-to “impending machine takeover”. It is, Jack felt, not just “a cool new toy” like any other product. Still, he is “being won over”, albeit with a “healthy level of scepticism”. He now uses AI for summarising material, streamlining information, and condensing his own work, to “clarify his own thinking” alongside others’.

Jack still harbours some fears: what might be lost through the “delegation of thinking to the software”, and how his own thinking patterns may change in response. Since master’s students are “tasked with their own set of knowledge-creation and cannot just rely on the work of others”, ChatGPT may allow “satisfaction of research requirements without compromising learning”, Jack supposes. Lastly, he added that prohibitive policies are “anti-intellectual and idealistic”, and that “the more intellectual purity is demanded of us, the more meaning and applicability [are] risked losing”.

I next interviewed LSE Professor Charlie Beckett, director of journalism think-tank Polis and leader of its ‘JournalismAI’ project. His present concern was that universities are generally underprepared for AI’s “potentially destabilising” influence. However, Beckett offered some reassurance: AI is “not a disaster, as such,” for education. It requires addressing promptly, with technology needed to monitor assessed work, but it may present opportunities for assessment, such as normalising written or oral tests. We cannot ignore or ban AI, so we must instead successfully “bring it into the classroom”.

Emma McCoy, Vice President and Pro-Vice-Chancellor in Education at LSE, also believes that universities have a “responsibility to embed AI into the curriculum”, so that students properly understand it. She’s already seen how rapidly AI is changing computer-coding practices. McCoy believes that LSE is well-placed to lead both research and teaching on AI usage, and that “everything is on the table” regarding AI in education.

Eric Neumayer, LSE’s interim President and Vice-Chancellor, argues that we must “embrace AI, absolutely…the world has moved on [and] everybody has access now”. AI presents interesting research opportunities, he believes, and benefits could include immediate exam feedback. Assessment is the “real challenge”, however: we cannot fight technological progress, but he remains optimistic that universities will prevail. Neumayer is sure that “human intelligence remains key”, and won’t be trumped. We must “come to terms with what [counts] as intelligence”, as we aim to “make sense of a chaotic world” with this new technology, he professed.

On top of these issues, universities aiming for carbon-neutrality, as LSE claims to, will also have to seriously confront the significant environmental costs of AI computing. But some fundamental problems at hand are not entirely new. The first and most obvious regards integrity, honesty, and transparency: any AI usage must be stated as such (though, at present, this effectively falls to individuals). Plagiarism, dishonesty, and malpractice have always plagued academia, but AI clearly broadens the scope.

Another issue concerns systemic biases against speakers of English as a second language (ESL). Their writing might be more frequently flagged as AI-generated, according to Skrandies, and they could feel greater pressure to use AI in competition with anglophone peers. As such, the invariably Anglo-centric academic world may further disadvantage ESL students.

Skrandies elucidated an increasingly visible third problem: divergence between commodified academic certifications and grading systems, and “true learning” objectives. There is “conflict between the idea that we are individuals who can be objectively graded”, Skrandies suggested, which is “counterproductive to the idea of learning”. Yet academia, especially when it leaves students with such massive debts, is “tied to the system of grading”. AI might yet force a complete rethinking of this ubiquitous metric.

Ultimately, as Neumayer alluded to, there is the matter of what exactly counts as one’s ‘own work’. Where exactly should lines be drawn on originality? Stealing someone else’s remarks from a seminar for an essay? Mimicking a lecturer’s argument, or private conversations? Perhaps using YouTube without citing videos? If one’s parents were university-educated, that too would likely influence one’s thoughts and written work. No woman is an island, after all: academia is based on the collective production of ideas and debates through centuries of open dialogue. We just need the measures to ensure that this endeavour is not lost to AI.

Nevertheless, as Isaac Newton famously said, “if I have seen further [than others], it is by standing on the shoulders of giants”. Thanks to AI, those giants have now grown far greater. It will be the fortune or misfortune of younger generations, the AI-‘guinea pigs’, as Professor Beckett jovially labelled us. With careful guidance, healthy imagination, and aspiring attitudes, we may still hope that their shoulders allow us to see further than any generation before.

Illustration by Francesca Corno